This is a 30-minute talk I gave at Blueprint LDN about Resilience Engineering and how it relates to DevOps, psychological safety, and complex systems, with an introduction to some of the main topics and key people in the field.

Psychological safety, homogeneity and diversity in group contexts.

Psychological safety stems in large part from a shared set of group values, norms, and experiences. When we’re in a group that shares the same perspectives, life experiences, language and culture, we feel safer and more able to listen, contribute, admit mistakes and challenge the ideas of others.

Think about tribalism – which is sometimes framed as a negative, but can be extremely powerful for good and for bad. Football fans who all passionately support the same team are an incredible support group and safe space for many people. But of course there can be a dark side to the same phenomenon, as manifested by football hooliganism and violence, whereby outgroup members are seen as “The Other” and thus either a threat, or a group to be dominated or beaten.

Being part of a “tribe”, a group of similar people (similar in whatever way brings that tribe together, whether it’s a football club or a sexuality) brings with it a sense of belonging and safety.

Groups of people who are more similar, who have similar life experiences, language, values, and expectations (of each other and of themselves), will find it easier to attain higher levels of psychological safety because they understand more implicitly what’s expected, what behaviour is accepted and “good”, and feel less fear from other members of the group.

This is why it’s useful to have “safe spaces” where people, for example, LGBTQ+, or people of colour, can talk openly about the things they’re dealing with. Having people in the group without that life experience can make psychological safety harder to attain – but not impossible. This applies not just to those who we’d typically view as disadvantaged or disenfranchised in some way – we are all somewhere on the myriad spectrum of intersectionality.

For example, I, as a white, English-speaking man, may feel very safe in a very heterogeneous group of different genders, ethnicities and languages by dint of the privilege that I’m lucky to benefit from as a white man – but if I were in a group that also consisted of various Elon Musk and Jeff Bezos characters, I’d probably feel very psychologically unsafe, and I’d be highly unlikely to speak up, admit mistakes, or challenge the ideas of others in the group.

Creating a homogeneous group, whether it’s of gender, sexuality, class, colour or background, is a shortcut, a cheat code, for psychological safety.

But ultimately, homogeneous groups will never attain the performance level that a highly diverse, heterogeneous group will. Diversity brings different experiences, knowledge and insights that increase the ultimate capability of the group to do the thing they’re trying to do – whether that’s build a product or run a country.

So, whilst a homogeneous group can be an effective way to quickly increase the psychological safety of a group, it is just a shortcut. It’s not a reason to suggest that we should create homogeneous teams – and certainly not an argument to segregate society, if one was that way inclined.

Diversity arguably does make psychological safety harder to attain, because there isn’t as much of a set of inherent shared group norms, beliefs and experiences. However, that “weakness” is also a strength. By working on creating those group norms, values and behaviours, a more diverse group can reach far higher potential than a less diverse, more homogeneous group – through exploiting that wide spectrum of experiences, knowledge and insights.

Resilience Engineering, DevOps, and Psychological Safety – resources

With thanks to Liam Gulliver and the folks at DevOps Notts, I gave a talk recently on Resilience Engineering, DevOps, and Psychological Safety.

It’s pretty content-rich, and here are all the resources I referenced in the talk, along with the talk itself, and the slide deck. Please get in touch if you would like to discuss anything mentioned, or you have a meetup or conference that you’d like me to contribute to!

Here’s a psychological safety practice playbook for teams and people.

https://openpracticelibrary.com/

Resilience Engineering and DevOps slide deck

https://docs.google.com/presentation/d/1VrGl8WkmLn_gZzHGKowQRonT_V2nqTsAZbVbBP_5bmU/edit?usp=sharing

Resilience engineering – Where do I start?

Turn the ship around by David Marquet

Lorin Hochstein and Resilience Engineering fundamentals

https://github.com/lorin/resilience-engineering/blob/master/intro.md

Scott Sagan, The Limits of Safety:

“The Limits of Safety: Organizations, Accidents, and Nuclear Weapons”, Scott D. Sagan, Princeton University Press, 1993.

Sidney Dekker: “The Field Guide To Understanding Human Error”, 2014

John Allspaw: “Resilience Engineering: The What and How”, DevOpsDays 2019.

https://devopsdays.org/events/2019-washington-dc/program/john-allspaw/

Erik Hollnagel: Resilience Engineering

https://erikhollnagel.com/ideas/resilience-engineering.html

https://www.cognitive-edge.com/

Jabe Bloom, The Three Economies

http://blog.jabebloom.com/2020/03/04/the-three-economies-an-introduction/

Tarcisio Abreu Saurin – Resilience requires Slack

Resilience engineering and DevOps – a deeper dive

Symposium with John Willis, Gene Kim, Dr Sidney Dekker, Dr Steven Spear, and Dr Richard Cook: Safety Culture, Lean, and DevOps

Approaches for resilience and antifragility in collaborative business ecosystems: Javaneh Ramezani, Luis M. Camarinha-Matos:

https://www.sciencedirect.com/science/article/pii/S0040162519304494

Garvin, D.A., Edmondson, A.C. and Gino, F., 2008. Is yours a learning organization?. Harvard business review, 86(3), p.109.

Psychological safety: Edmondson, A., 1999. Psychological safety and learning behavior in work teams. Administrative science quarterly, 44(2), pp.350-383.

The four stages of psychological safety, Timothy R. Clark (2020)

Measuring psychological safety:

And of course the YouTube video of the talk:

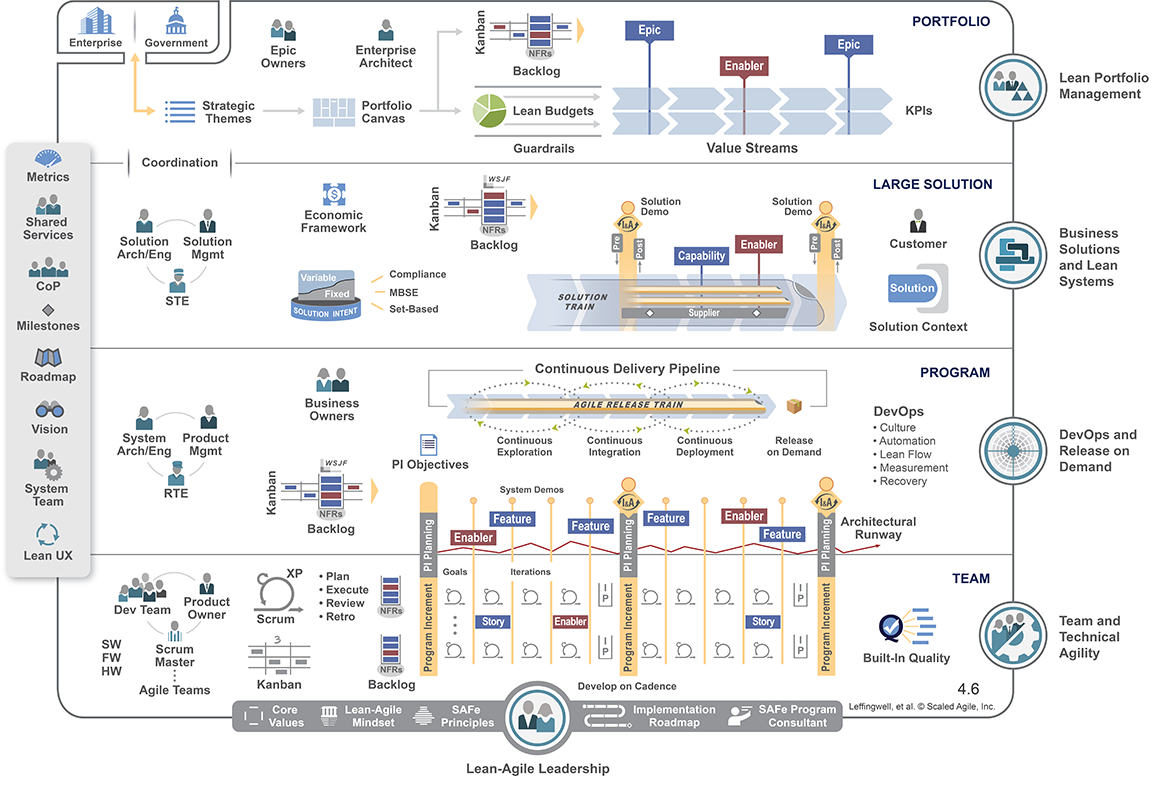

A Critique of SAFe – The Scaled Agile Framework

This is a critique of the Scaled Agile Framework (SAFe).

It’s a critique, so it’s pretty negative! There are some benefits of using SAFe in large organisations, some very good use cases for full or partial adoption as long as it’s considered part of a journey, as long as your eyes are open to the problems with SAFe, and your reasons for adopting it are sound.

However, here I’m describing ten key points emphasising why it’s not an appropriate approach for most organisations looking to scale software delivery.

I’m really interested in your opinion, so please do get in touch if you wish to make a comment or suggestion. Ultimately, we must remember to scale down the problem before scaling up the solution.

In Summary: Problems with SAFe approaches:

- SAFe encourages normalisation of batch sizing across teams, incentivises increasing task sizes, and fundamentally misappropriates what story points are for.

- SAFe can cause increased localised technical debt.

- SAFe creates conflicts with support, operational and SRE functions.

- SAFe decreases inter-team (particularly value stream) collaboration.

- SAFe uses fallacies in estimation.

- SAFe decreases the agile focus on value in favour of “what management wants”.

- SAFe decreases the utility of, and the focus on, retrospectives.

- SAFe is not Agile – it encourages top-down, large-batch planning rather than small, iterative, feedback loops.

- SAFe is framed as a solution, rather than a stage of a journey.

- SAFe scales up the solution rather than scaling down the problem.

11 – (bonus point, thanks to Matthew Skelton) – SAFe encourages temporal coupling of teams.

In Detail: a critique of the Scaled Agile Framework:

1 – Nothing in agile suggests that we need to, or even *should*, measure work units (i.e. story points) in a uniform manner across teams. Story points exist to help the people *doing* the work break things down into an optimum batch size, which makes deliverables achievable and less complex, and facilitates flow. Indeed, SAFe actually encourages larger batch sizes through front-loaded planning, not smaller sizes planned through more iterative methods.

SAFe tries to normalise story points across teams for various reasons, but often the underlying motive is a strong desire to measure and compare the delivery of teams and people. This is not what story points are for. Story points do not exist to measure how “productive” developers are.

2 – Technical debt tends to increase in SAFe organisations because the prioritisation of dealing with it is raised to a management level rather than team level. This is counter-productive for technical debt that originates at the team level (which most of it does). Management will tend to prioritise features and functions, delaying the pay-back of localised technical debt, and resulting in slower, higher risk, more brittle systems.

3 – If SAFe is applied to more operational functions, such as technology support, operations, or SRE, conflicts between delivery and support functions arise, because supporting teams typically need to work either responsively, dealing with issues as they arise, or on very short cycles – not the Programme Increment cycle time imposed by SAFe.

4 – Due to the focus on deliverables and accountability through project or product managers, teams may be discouraged from assisting each other, as they are measured by their own delivery and productivity: how much they assist other teams is rarely valued.

5 – The concept of “ideal dev days” is often used for estimating in SAFe. Everyone else knows that ideal dev days are a fallacy. Instead, look at past similar deliverables, and see how long they took. This is a much more predictive metric, and is less susceptible to optimism bias or wanting to please the boss.

6 – The concept of “value” often breaks down in SAFe, through a focus on volume of delivery and meeting the (often arbitrary) deadlines imposed by management in PI planning. As a result, what end-users actually want is often ignored in favour of what management wants.

7 – PI planning includes a small element of retrospective activity, but it’s too little, too late. The retrospective feedback loops need to be short and light, not tacked on to PI planning as an afterthought. Here’s a comprehensive guide to retrospectives that also covers some really useful suggestions for running them with remote and distributed teams.

8 – Agile was created as a response to frustrations felt across the industry from heavyweight, top-down project management methodology that was killing the sector. Trying to scale Agile up by applying heavyweight, top-down methodologies is antithetical.

9 – Some SAFe practitioners describe it as a transition stage, a process through which organisations can achieve increased capability at scale. I would agree: if an organisation feels the need to adopt SAFe, it should be as training wheels, a structure through which great capabilities can be built, before throwing off the shackles of a rigid, top-down framework. But if SAFe really is a transitional framework, why does the SAFe model not include anything about the transition away from it?

10 – In reality, most organisations don’t need SAFe. They’re not so big that they need such a big solution. SAFe is a comfort blanket for organisations used to traditional, slow, heavyweight, command-control structures. Your projects and products actually aren’t that big – and if they are, then that’s the problem, not the management process.
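Point 5 above suggests estimating from how long similar past deliverables actually took, rather than from “ideal dev days”. A minimal sketch of that idea follows. The function name `forecast_from_history`, the choice of the median and 85th percentile as summary figures, and the sample durations are all assumptions made for illustration, not part of any framework.

```python
from statistics import median, quantiles


def forecast_from_history(past_durations_days):
    """Summarise how long similar past deliverables took.

    Returns (typical, cautious): the median and the 85th percentile
    of the observed durations, in days.
    """
    if len(past_durations_days) < 2:
        raise ValueError("need at least two past deliverables to forecast")
    # n=20 yields cut points at 5%, 10%, ..., 95%; index 16 is the 85% point.
    cut_points = quantiles(past_durations_days, n=20, method="inclusive")
    return median(past_durations_days), cut_points[16]


# Hypothetical durations (in working days) of ten comparable past deliverables.
history = [3, 5, 4, 8, 6, 13, 5, 7, 4, 9]
typical, cautious = forecast_from_history(history)
print(f"Typical: {typical} days; cautious (85th percentile): {cautious:.1f} days")
```

Quoting a range drawn from real history, rather than a single optimistic number, is one way to make the estimate less susceptible to optimism bias.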

Fundamentally, SAFe tends to ignore, or encourages management to ignore, the possibility that those closest to the work might be the best equipped to make decisions about it. Scale the work down, not the process up. SAFe fits the delivery model to the organisational structure, rather than forcing the organisation to adopt new ways.

Here’s a bonus point 11, thanks to Matt Skelton of Team Topologies: SAFe, via the enforced Program Increment approach, encourages (or very possibly forces) at least a temporal coupling of teams that isn’t warranted. In fact, any sort of forced coupling is an antipattern for a fast flow of change, and via Conway’s Law, probably introduces architectural coupling too (which is bad). Given that SAFe adopts the PI as the core foundation of the approach, it’s unlikely that any SAFe practitioner would suggest dropping PI when the teams are mature enough to do so… or would they?

2023 update: Here’s a along with case studies and expert commentary from practitioners and researchers alike.

Resilience Engineering and DevOps – A Deeper Dive

[This is a work in progress. If you spot an error, or would like to contribute, please get in touch]

The term “Resilience Engineering” is appearing more frequently in the DevOps domain, the field of physical safety, and other industries, but there is some disagreement about what it really means. That disagreement doesn’t seem to occur in those domains where Resilience Engineering has been prevalent and applied for some time, such as healthcare and aviation. Resilience Engineering is an academic field of study and practice in its own right. There is even a Resilience Engineering Association.

Resilience Engineering is a multidisciplinary field associated with safety science, complexity, human factors and associated domains that focuses on understanding how complex adaptive systems cope with, and learn from, surprise.

It addresses human factors, ergonomics, complexity, non-linearity, inter-dependencies, emergence, formal and informal social structures, threats and opportunities. A common refrain in the field of resilience engineering is “there is no root cause”, and blaming incidents on “human error” is also known to be counterproductive, as Sidney Dekker explains so eloquently in “The Field Guide To Understanding Human Error”.

Resilience is “the intrinsic ability of a system to adjust its functioning prior to, during, or following changes and disturbances, so that it can sustain required operations under both expected and unexpected conditions.” – Prof Erik Hollnagel

It is the “sustained adaptive capacity” of a system, organisation, or community.

Resilience engineering has the word “engineering” in it, which typically makes us think of machines, structures, or code, and this is maybe a little misleading. Instead, try to think of engineering as the process of response, creation and change.

Systems

Resilience Engineering also refers to “systems”, which might also lead you down a certain mental path of mechanical or digital systems. Widen your concept of systems from software and machines, to organisations, societies, ecosystems, even solar systems. They’re all systems in the broader sense.

Resilience engineering refers in particular to complex systems, and typically, complex systems involve people. Human beings like you and me (I don’t wish to be presumptive but I’m assuming that you’re a human reading this).

Consider Dave Snowden’s Cynefin framework:

Systems in an Obvious state are fairly easy to deal with. There are no unknowns – they’re fixed and repeatable in nature, and the same process achieves the same result each time, so that we humans can use things like Standard Operating Procedures to work with them.

Systems in a Complicated state are large, usually too large for us humans to hold in our heads in their entirety, but are finite and have fixed rules. They possess known unknowns – by which we mean that you can find the answer if you know where to look. A modern motorcar, or a game of chess, are complicated – but possess fixed rules that do not change. With expertise and good practice, such as employed by surgeons or engineers or chess players, we can work with systems in complicated states.

Systems in a Complex state possess unknown unknowns, and include realms such as battlefields, ecosystems, organisations and teams, or humans themselves. The practice in complex systems is probe, sense, and respond. Complexity resists reductionist attempts at determining cause and effect because the rules are not fixed: the effects of changes can themselves change over time, and even the act of measuring or sensing in a complex system can affect the system. When working with complex states, feedback loops that facilitate continuous learning about the changing system are crucial.

Systems in a Chaotic state are impossible to predict. Examples include emergency departments or crisis situations. There are no real rules to speak of, even ones that change. In these cases, acting first is necessary. Communication is rapid, and top-down or broadcast, because there is no time, or indeed any use, for debate.

Resilience

As Erik Hollnagel has said repeatedly since Resilience Engineering began (Hollnagel & Woods, 2006), resilience is about what a system can do — including its capacity:

- to anticipate — seeing developing signs of trouble ahead to begin to adapt early and reduce the risk of decompensation

- to synchronize — adjusting how different roles at different levels coordinate their activities to keep pace with tempo of events and reduce the risk of working at cross purposes

- to be ready to respond — developing deployable and mobilizable response capabilities in advance of surprises and reduce the risk of brittleness

- for proactive learning — learning about brittleness and sources of resilient performance before major collapses or accidents occur by studying how surprises are caught and resolved

(From Resilience is a Verb by David D. Woods)

| Capacity | Description |
| --- | --- |
| Anticipation | Create foresight about future operating conditions, revise models of risk |
| Readiness to respond | Maintain deployable reserve resources available to keep pace with demand |
| Synchronization | Coordinate information flows and actions across the networked system |
| Proactive learning | Search for brittleness, gaps in understanding, trade-offs, re-prioritisations |

Provan et al (2020) build upon Hollnagel’s four aspects of resilience to show that resilient people and organisations must possess a “Readiness to respond”, and state: “This requires employees to have the psychological safety to apply their judgement without fear of repercussion.”

Resilience is therefore something that a system “does”, not “has”.

Systems comprise structures, technology, rules, inputs and outputs, and most importantly, people.

“Resilience is about the creation and sustaining of various conditions that enable systems to adapt to unforeseen events. *People* are the adaptable element of those systems” – John Allspaw (@allspaw) of Adaptive Capacity Labs.

Resilience therefore is about “systems” adapting to unforeseen events, and the adaptability of people is fundamental to resilience engineering.

And if resilience is the potential to anticipate, respond, learn, and change, and people are part of the systems we’re talking about:

We need to talk about people: What makes people resilient?

Psychological safety

Psychological safety is the key fundamental aspect of groups of people (whether that group is a team, organisation, community, or nation) that facilitates performance. It is the belief, within a group, “that one will not be punished or humiliated for speaking up with ideas, questions, concerns, or mistakes.” – Edmondson, 1999.

Amy Edmondson also talks about the concept of a “Learning organisation” – essentially a complex system operating in a vastly more complex, even chaotic wider environment. In a learning organisation, employees continually create, acquire, and transfer knowledge, helping their company adapt to the unpredictable faster than rivals can (Garvin et al, 2008).

“A resilient organisation adapts effectively to surprise.” (Lorin Hochstein, Netflix)

https://twitter.com/cyetain/status/1242926422869651458

In this sense, we can see that a “learning organisation” and a “resilient organisation” are fundamentally the same.

Learning, resilient organisations must possess psychological safety in order to respond to changes and threats. They must also have clear goals, vision, processes and structures. According to Conway’s Law:

“Any organisation that designs a system (defined broadly) will produce a design whose structure is a copy of the organisation’s communication structure.”

For both the organisation, and the systems that organisation has built, to respond quickly to change, the organisation must be structured in such a way that response to change is as rapid as possible. The details will depend significantly on the organisation itself, but fundamentally, smaller, loosely coupled, autonomous and expert teams will be able to respond to change faster than large, tightly bound teams with low autonomy. Pais and Skelton’s Team Topologies explores this in much more depth.

Engineer the conditions for resilience engineering

“Before you can engineer resilience, you must engineer the conditions in which it is possible to engineer resilience.” – Rein Henrichs (@reinH)

As we’ve seen, an essential component of learning organisations is psychological safety. Psychological safety is a necessary condition (though not sufficient) for the conditions of resilience to be created and sustained.

Therefore we must create psychological safety in our teams, our organisations, our human “systems”. Without this, we cannot engineer resilience.

We create, build, and maintain psychological safety via three core behaviours:

- Framing work as a learning problem, not an execution problem. The primary outcome should be knowing how to do it even better next time.

- Acknowledging your own fallibility. You might be an expert, but you don’t know everything, and you get things wrong – if you admit it when you do, you allow others to do the same.

- Modelling curiosity – ask a lot of questions. This creates a need for voice. By you asking questions, people HAVE to speak up.

Resilience engineering and psychological safety

Psychological safety enables these fundamental aspects of resilience – the sustained adaptive capacity of a team or organisation:

- Taking risks and making changes that you don’t, or can’t, fully understand the outcomes of.

- Admitting when you made a mistake.

- Asking for help

- Contributing new ideas

- Detailed systemic cause* analysis (The ability to get detailed information about the “messy details” of work)

(*There is never a single root cause)

Let’s go back to that phrase at the start:

Sustained adaptive capacity.

What we’re trying to create is an organisation, a complex system, and sub systems (maybe including all that software we’re building) that possesses a capacity for sustained adaptation.

With DevOps we build systems that respond to demand and scale up and down; we implement redundancy and low dependency to allow for graceful failure; and we identify and react to security threats.

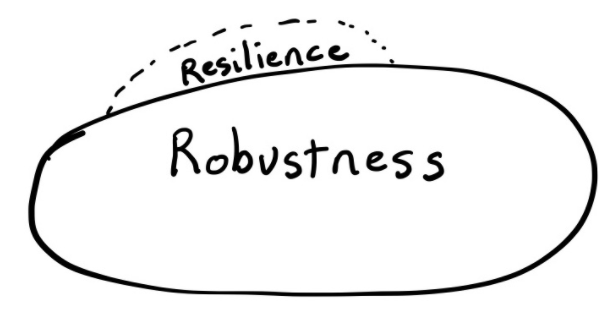

Pretty much all of these only contribute to robustness.

(David Woods, Professor, Integrated Systems Engineering Faculty, Ohio State University)

You may want to think back to the Cynefin model, and think of robustness as being able to deal well with known unknowns (complicated systems), and resilience as being able to deal well with unknown unknowns (complex, even chaotic systems). Technological or DevOps practices that primarily focus on systems, such as microservices, containerisation, autoscaling, or distribution of components, build robustness, not resilience.

However, if we are to build resilience, the sustained adaptive capacity for change, we can utilise DevOps practices for our benefit. Like psychological safety, none of them is sufficient on its own, but they are necessary. Using automation to reduce the cognitive load on people is important: by reducing the extraneous cognitive load, we maximise the germane, problem-solving capability of people. The provision of other tools, such as internal platforms and automated testing pipelines, along with increased observability of systems, increases the ability of people and teams to respond to change, and increases their sustained adaptive capacity.

If brittleness is the opposite of resilience, what does “good” resilience look like? The word “anti-fragility” appears to crop up fairly often, due to the book “Antifragile: Things that Gain from Disorder” by Nassim Taleb. What Taleb describes as antifragile, ultimately, is resilience itself.

I have my own views on this, but fundamentally I think this is the danger of academia (as in the field of resilience engineering) restricting access to knowledge. A lot of resilience engineering literature is held behind academic paywalls and journals, which most practitioners do not have access to. It should be of no huge surprise that people may reject a body of knowledge if they have no access to it.

Observability

It is absolutely crucial to be able to observe what is happening inside the systems. This refers to anything from analysing system logs to identify errors or future problems, to managing Work In Progress (WIP) to highlight bottlenecks in a process.
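As one illustration of using WIP to highlight bottlenecks, the sketch below counts work items per stage and flags any stage that exceeds its limit. The stage names, the limits, and the `find_bottlenecks` helper are hypothetical, chosen only to show the idea; a real board would supply its own data.

```python
from collections import Counter


def find_bottlenecks(work_items, wip_limits):
    """Return the stages whose work-in-progress count exceeds its limit."""
    counts = Counter(item["stage"] for item in work_items)
    return sorted(
        stage for stage, limit in wip_limits.items() if counts[stage] > limit
    )


# Hypothetical board: each item is tagged with the stage it currently sits in.
board = [
    {"id": 1, "stage": "in_progress"},
    {"id": 2, "stage": "review"},
    {"id": 3, "stage": "review"},
    {"id": 4, "stage": "review"},
    {"id": 5, "stage": "testing"},
]
limits = {"in_progress": 3, "review": 2, "testing": 2}
print(find_bottlenecks(board, limits))
```

A stage that repeatedly shows up here is a signal worth discussing as a team; the count alone does not explain why work is piling up there.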

Too often, engineering and technology organisations look only inward, whilst many of the threats to systems are external to the system and the organisation. Observability must also concern external metrics and qualitative data: what is happening in the marketspace, the economy, and what are our competitors doing?

Resilience Engineering and DevOps

What must we do?

Create psychological safety – this means that people can ask for help, raise issues, highlight potential risks and “apply their judgement without fear of repercussion.” There’s a great piece here on psychological safety and resilience engineering.

Manage cognitive load – so people can focus on the real problems of value – such as responding to unanticipated events.

Apply DevOps practices to technology – use automation, internal platforms and observability, amongst other DevOps practices.

Increase observability and monitoring – this applies to systems (internal) and the world (external). People and systems cannot respond to a threat if they don’t see it coming.

Embed practices and expertise in component causal analysis – whether you call it a post-mortem, retrospective or debrief, build the habits and expertise to routinely examine the systemic component causes of failure. Try using Rothman’s Causal Pies in your next incident review.

Run “fire drills” and disaster exercises. Make it easier for humans to deal with emergencies and unexpected events by making it a habit. This frees up cognitive capacity for problem solving in emergencies.

Structure the organisation in a way that facilitates adaptation and change. Consider appropriate team topologies to facilitate adaptability.

In summary

Through facilitating learning, responding, monitoring, and anticipating threats, we can create resilient organisations. DevOps and psychological safety are two important components of resilience engineering, and resilience engineering (in my opinion) is soon going to be seen as a core aspect of organisational (and digital) transformation.

References:

Conway, M. E. (1968) How Do Committees Invent? Datamation magazine. F. D. Thompson Publications, Inc. Available at: https://www.melconway.com/Home/Committees_Paper.html

Dekker, S. 2006. The Field Guide to Understanding Human Error. Ashgate Publishing Company, USA.

Edmondson, A., 1999. Psychological safety and learning behavior in work teams. Administrative science quarterly, 44(2), pp.350-383.

Garvin, D.A., Edmondson, A.C. and Gino, F., 2008. Is yours a learning organization?. Harvard business review, 86(3), pp.109-116, 134.

Hochstein, L. (2019) Resilience engineering: Where do I start? Available at: https://github.com/lorin/resilience-engineering/blob/master/intro.md (Accessed: 17 November 2020).

Hollnagel, E., Woods, D. D. & Leveson, N. C. (2006). Resilience engineering: Concepts and precepts. Aldershot, UK: Ashgate.

Hollnagel, E. Resilience Engineering (2020). Available at: https://erikhollnagel.com/ideas/resilience-engineering.html (Accessed: 17 November 2020).

Provan, D.J., Woods, D.D., Dekker, S.W. and Rae, A.J., 2020. Safety II professionals: how resilience engineering can transform safety practice. Reliability Engineering & System Safety, 195, p.106740. Available at https://www.sciencedirect.com/science/article/pii/S0951832018309864

Woods, D. D. (2018). Resilience is a verb. In Trump, B. D., Florin, M.-V., & Linkov, I. (Eds.). IRGC resource guide on resilience (vol. 2): Domains of resilience for complex interconnected systems. Lausanne, CH: EPFL International Risk Governance Center. Available on irgc.epfl.ch and irgc.org.

John Allspaw has collated an excellent book list for essential reading on resilience engineering here.